Will artificial intelligence (AI) replace sports practitioners?

- Kevin Yven and Asker Jeukendrup

- Mar 21

The emergence of artificial intelligence (AI) in sport has not only transformed workflows; it has triggered an emotional response. Excitement, curiosity, scepticism and fear coexist in equal measure. Among nutritionists, coaches, sport scientists and performance staff, one question has come to dominate the discussion: "Will AI replace sports practitioners?" The question is understandable. When software can automatically generate fuelling plans, detect performance trends or summarise research in seconds, it becomes natural to wonder where the human expert fits in.

To answer this meaningfully, it is necessary to move beyond speculation and discuss what AI does exceptionally well, and what it fundamentally cannot do. This is not a story of machines versus professionals; it is a story of how the nature of professional practice is changing.

What AI does better than humans

There is no advantage in pretending that humans outperform AI everywhere. On the contrary, identifying where AI is superior helps practitioners decide how to use it strategically rather than defensively.

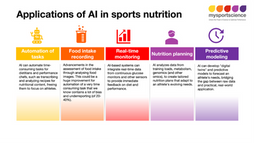

AI’s core strengths include:

- Scale: evaluating patterns across datasets too large for human cognition.

- Speed: performing calculations and interpretations instantly.

- Consistency: never fatigued, distracted or rushed.

- Breadth of knowledge: access to vast pools of information and memory.

When questions have black-and-white answers and the correct response can be retrieved from data patterns, AI has a structural advantage. Similarly, in areas of sport where prediction is driven by well-understood metrics, such as energy expenditure in cycling or time-in-range analysis from continuous glucose monitoring, models can support decision-making at a level of efficiency no individual could match manually.
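As an illustration of how mechanical such a metric can be, here is a minimal sketch of a time-in-range calculation. It assumes equally spaced glucose readings in mmol/L and a target range of 3.9 to 10.0 mmol/L, which is a commonly used clinical range and not one taken from this article; the function name and the readings are hypothetical.

```python
from typing import Sequence


def time_in_range(
    readings_mmol_l: Sequence[float],
    low: float = 3.9,    # assumed lower bound, not from the article
    high: float = 10.0,  # assumed upper bound, not from the article
) -> float:
    """Return the percentage of readings that fall within [low, high].

    Assumes the readings are equally spaced in time, so the share of
    readings equals the share of time.
    """
    if not readings_mmol_l:
        raise ValueError("at least one reading is required")
    in_range = sum(1 for r in readings_mmol_l if low <= r <= high)
    return 100.0 * in_range / len(readings_mmol_l)


# Hypothetical readings for illustration only.
readings = [4.2, 5.1, 6.8, 10.4, 11.2, 9.6, 3.7, 5.5]
print(f"{time_in_range(readings):.1f}% time in range")
```

The arithmetic is trivial for software. Deciding whether the chosen range is appropriate for a given athlete and training context is not something the calculation can do.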

The mistake is not acknowledging AI’s strengths. The mistake is believing that recognising patterns is the same as understanding their meaning.

Where AI fails and why it matters

AI struggles most in the exact places where practitioners earn their value: nuance, uncertainty and context. The limitations are not subtle; they are structural.

AI cannot interpret the psychosocial world of athletes

A fuelling plan is irrelevant if an athlete is stressed, unmotivated, homesick or anxious about body weight. A hydration strategy can fail if an athlete dislikes the flavour. No algorithm can measure self-doubt, cultural food norms, team dynamics and the many other factors that influence human behaviour.

AI cannot recognise when the question is wrong

AI will provide an answer, but sometimes the practitioner’s job is not to produce an answer but to challenge the request. For example, should an athlete really lose weight during a heavy training block? Or should a team introduce a supplement simply because it is new?

AI cannot refuse a flawed premise. Most large language models (LLMs), such as ChatGPT, Gemini and Claude, are designed to answer the question given, even when the question itself is wrong, misleading, or based on false information.

AI cannot understand ethics or consequences

Nutrition advice can impact health, weight, psychology and performance. Coaching decisions influence careers. AI has no concept of harm.

AI cannot declare uncertainty

In science, uncertainty is honesty. In AI, uncertainty is impossible. The model will always produce an answer even when the correct answer is “it depends,” “we do not know,” or “more information is required.”

These limitations are not temporary bugs awaiting future updates. They reflect an absence of consciousness and accountability, the cornerstones of professional practice.

The real risk is not AI itself

The most dangerous future for sport is not one in which AI becomes too powerful. It is one in which practitioners assume that AI is more reliable than it is. When athletes receive nutrition recommendations from a chatbot, or when coaches rely on automated scores without understanding their origins, AI does not enable success: it amplifies risk.

An example is a recent study by Fridolfsson et al. (1), which evaluated the performance of 3 leading LLMs (ChatGPT-4o, Claude 3.5 Sonnet, and Gemini 1.5 Pro) in estimating food weight, energy content, and macronutrient composition from standardised food photographs. Systematic underestimation of large portions and high variability in macronutrient estimation (48-66% error) indicate that these general-purpose LLMs are not yet suitable for dietary assessment in clinical or athletic populations where accurate quantification is critical.
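To give a sense of what an error of this size means, the sketch below computes a mean absolute percentage error between weighed and estimated values. This is an assumption for illustration: the article does not say which error metric the study used, and the function name and numbers are hypothetical, not data from the study.

```python
from typing import Sequence


def mean_absolute_percentage_error(
    actual: Sequence[float], estimated: Sequence[float]
) -> float:
    """Return the mean of |estimated - actual| / |actual|, as a percentage."""
    if not actual or len(actual) != len(estimated):
        raise ValueError("inputs must be non-empty and of equal length")
    if any(a == 0 for a in actual):
        raise ValueError("actual values must be non-zero")
    total = sum(abs(e - a) / abs(a) for a, e in zip(actual, estimated))
    return 100.0 * total / len(actual)


# Hypothetical carbohydrate values in grams (weighed vs. estimated from a photo).
weighed = [100.0, 200.0, 400.0]
estimated = [110.0, 150.0, 200.0]
print(f"{mean_absolute_percentage_error(weighed, estimated):.1f}% error")
```

In this made-up example the largest portion is underestimated the most, which mirrors the pattern described above without reproducing the study's figures.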

Professionals won’t be replaced by AI itself. But they will be replaced by professionals who know how to use AI effectively.

This is where the role of the professional is extremely important. The practitioner becomes the translator of technology, the validator of evidence, the filter between algorithm and athlete. AI becomes a tool, not a decision-maker.

The practitioner's new role

Across disciplines, AI is beginning to reshape how time is spent, not by replacing expertise, but by shifting where that expertise is applied. Today, AI can summarise evidence, retrieve information, and automate some of the background work that once consumed practitioners’ attention. But it cannot yet calculate individual energy balance or carbohydrate needs with sufficient accuracy, at least without the right human inputs.

Meaningful nutrition planning still depends on contextual understanding of training load, body composition, environment, and behavioural factors that remain stubbornly human.

In theory, automation should free practitioners to spend more time on interpretation, critical thinking, and athlete engagement. In practice, the opposite often occurs: as systems become faster, people become less critical of their outputs. Convenience can replace reflection if vigilance is lost.

AI should accelerate thinking, not replace it. The professionals who will thrive are those who stay critical, using AI to amplify, not automate, their judgement.

AI does not reduce the scope of professional practice; it tests its depth. The challenge is to use automation to gain time for better reasoning, not to outsource reasoning itself.

A future defined by collaboration, not competition

The most compelling vision of the future is not one in which AI replaces practitioners, but one in which practitioners use AI as a tool to deliver the version of support athletes have always deserved: insight that is timely, individualised, and grounded in both science and humanity.

In that future:

AI detects patterns -> humans interpret these patterns.

AI retrieves evidence -> humans judge the quality and weighting of the evidence.

AI handles scale -> humans provide meaning and context.

Summary

The question “Will AI replace sports practitioners?” reflects a narrow view of practice that reduces expertise to tasks. AI can automate tasks, and it should. It is unrealistic and unnecessary for performance professionals to spend hours every day on scheduling, data extraction, or repetitive research summaries. Those responsibilities are now better handled by machines.

But AI will not replace the capacity to understand an athlete, to navigate uncertainty, to balance physiology with psychology, or to transform data into action an athlete is willing to take. The dietitians, coaches and sport scientists of the future will not be defined by what they protect from automation, but by what they choose to do with the time that automation creates.

References

1. Fridolfsson, Jonatan et al. Performance Evaluation of 3 Large Language Models for Nutritional Content Estimation from Food Images. Current Developments in Nutrition, Volume 9, Issue 10, 107556.