When AI gets health questions wrong

- Asker Jeukendrup

- 3 hours ago

A new BMJ Open paper highlights an important problem: fluent answers are not always accurate answers. People are turning to chatbots like ChatGPT and Gemini for health advice, and athletes and practitioners use them for nutrition advice, updates or performance advice. But how reliable are these chatbots when the topic is health, nutrition or performance?

I was fortunate to be part of a group of established researchers that set out to address exactly that question. In the study, we audited five popular AI chatbots and examined how they responded to questions in health and medical areas that are particularly vulnerable to misinformation. The initiative was led by Dr Nick Tiller, and the paper was just published in BMJ Open. The paper is Open Access, and the full citation is listed under Reference at the end of this post.

The findings were clear and sobering. Performance was often poor. References were frequently unreliable. And the combination of confident language with weak evidence creates a real risk when these tools are used uncritically.

What the study investigated

The paper, “Generative artificial intelligence-driven chatbots and medical misinformation: an accuracy, referencing and readability audit,” evaluated five public-facing chatbots: Gemini, DeepSeek, Meta AI, ChatGPT and Grok. The models were tested in February 2025 using 50 prompts covering five categories: cancer, vaccines, stem cells, nutrition and athletic performance. The prompts included both closed-ended and open-ended questions, and they were deliberately designed to push the models toward common misinformation themes or contraindicated advice.

That design matters. In the real world, people do not always ask clean, well-structured questions. They ask questions shaped by confusion, bias, fear, headlines, social media, and prior beliefs. So if we want to understand the real-world risk of chatbots in health, we have to test them under pressure, not only in ideal scenarios. The study did that.

The responses were rated by subject experts. The researchers also assessed citation accuracy and completeness, and they measured readability using standard readability scoring.

The main findings

The headline result is difficult to ignore. Nearly half of all chatbot responses were rated as problematic. Specifically, 30% were classified as somewhat problematic and 19.6% as highly problematic. So this was not a case of occasional minor errors. Roughly one in five responses was considered highly problematic.
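The "nearly half" figure follows directly from the two reported percentages. As a quick check, the sketch below adds them and converts them into approximate response counts. It assumes both percentages are calculated over all 250 responses (five chatbots, 50 prompts each). The counts are implied by that assumption and are not figures quoted in this post.

```python
# Figures quoted in this post.
CHATBOTS = 5
PROMPTS_PER_CHATBOT = 50
SOMEWHAT_PROBLEMATIC_PCT = 30.0
HIGHLY_PROBLEMATIC_PCT = 19.6

# Assumption: both percentages use all responses as the denominator.
total_responses = CHATBOTS * PROMPTS_PER_CHATBOT

# Combined share of responses rated problematic to any degree.
problematic_pct = round(SOMEWHAT_PROBLEMATIC_PCT + HIGHLY_PROBLEMATIC_PCT, 1)


def implied_count(pct: float, total: int) -> int:
    """Convert a percentage into the nearest whole number of responses."""
    return round(pct / 100 * total)


somewhat = implied_count(SOMEWHAT_PROBLEMATIC_PCT, total_responses)
highly = implied_count(HIGHLY_PROBLEMATIC_PCT, total_responses)

print(f"Total responses: {total_responses}")
print(f"Problematic share: {problematic_pct}%")
print(f"Implied counts: {somewhat} somewhat, {highly} highly problematic")
```

Under that assumption, just under half of the responses fall into one of the two problematic categories, and about one in five falls into the highly problematic category.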

Performance also differed by category. The chatbots did relatively better in vaccines and cancer. They did worse in stem cells, athletic performance and nutrition. For readers of MySportScience, that point is especially relevant. Two of the weakest domains were directly related to performance and nutrition, exactly the kind of fields where practitioners and athletes may increasingly turn to AI for support.

There was another important pattern. Closed-ended questions produced fewer highly problematic responses. Open-ended questions produced far more. That makes sense. The more freedom a model has to generate, the more room there is for speculation, hedging, false balance and unsupported advice.

The study also showed how rarely chatbots chose restraint. Across 250 total responses (50 prompts put to each of the five chatbots), there were only two refusals to answer, both from Meta AI. In other words, these systems usually gave an answer even when caution, deferral or refusal might have been the safer response.

Why the referencing data are so important

One of the most revealing parts of the paper was the citation audit. When asked to provide scientific references for their answers, the chatbots often returned incomplete, inaccurate or fabricated citations. Across the study, no chatbot produced a fully complete and accurate reference list for any prompt. The median completeness score was just 40%. Even the better-performing models were far from reliable.

This matters because references create trust. A reader sees author names, journal titles and article titles and assumes the answer is evidence-based. But if those references are wrong, incomplete or partly invented, the answer only looks scientific. It is credibility without verification. That is a key practical lesson. A chatbot does not become trustworthy simply because it provides references. In some cases, the references themselves need as much scrutiny as the main answer.
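To make the idea of a completeness score concrete, here is a minimal sketch of how such a score could be calculated. It is not the scoring rubric used in the paper: the checklist `REQUIRED_FIELDS`, the `completeness` function and the example references are all hypothetical and are there purely for illustration.

```python
from statistics import median

# Hypothetical checklist of fields a complete reference should carry.
# This is an illustrative assumption, not the rubric used in the paper.
REQUIRED_FIELDS = ("authors", "title", "journal", "year", "doi")


def completeness(reference: dict) -> float:
    """Return the fraction of required fields that are present and non-empty."""
    present = sum(
        1 for field in REQUIRED_FIELDS
        if str(reference.get(field) or "").strip()
    )
    return present / len(REQUIRED_FIELDS)


# Made-up placeholder references, for illustration only.
references = [
    {"authors": "Author A", "title": "Example title"},
    {"authors": "Author B", "title": "Another title",
     "journal": "Example Journal", "year": 2020},
    {"title": "Title only"},
]

scores = [completeness(ref) for ref in references]
print([f"{score:.0%}" for score in scores])
print(f"Median completeness: {median(scores):.0%}")
```

A check like this only tells you whether the fields are there. It says nothing about whether the reference actually exists or supports the claim, which still has to be verified by looking up the source.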

Readability was also a problem

The paper did not only look at accuracy. It also looked at readability. On average, all models produced responses rated as “Difficult,” roughly equivalent to college-level reading. That is far from ideal for public-facing health information.

This creates an awkward and potentially risky combination. The answers sound polished. They sound confident. They may even look scientific. But they are often too complex for the general public, and in many cases not reliable enough to justify the confidence with which they are presented.
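For readers curious how a readability rating such as “Difficult” can be produced, the sketch below uses the Flesch Reading Ease formula, in which scores between 30 and 50 are conventionally labelled “Difficult” (roughly college level). Treating this as the metric is an assumption, because this post only refers to standard readability scoring. The syllable counter is a rough heuristic, so the scores are approximate.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups and discount a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_reading_ease(text: str) -> float:
    """Flesch Reading Ease: higher scores mean easier text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))


def flesch_band(score: float) -> str:
    """Map a score to the conventional Flesch difficulty label."""
    if score >= 90:
        return "Very easy"
    if score >= 80:
        return "Easy"
    if score >= 70:
        return "Fairly easy"
    if score >= 60:
        return "Standard"
    if score >= 50:
        return "Fairly difficult"
    if score >= 30:
        return "Difficult"
    return "Very difficult"


# Made-up example sentences, not taken from the study.
simple = "The cat sat on the mat. The dog ran to the park."
dense = ("Supplementation protocols necessitate individualised periodisation "
         "considering metabolic heterogeneity.")

for text in (simple, dense):
    score = flesch_reading_ease(text)
    print(f"{score:.1f} -> {flesch_band(score)}")
```

Long sentences and long words both push the score down, which is why polished, technical-sounding answers tend to land in the harder bands.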

What this means for sport and sports nutrition

This is where the paper connects directly to the broader AI discussion we have already been having on MySportScience.

In “Artificial intelligence (AI) in sport,” the focus was on what AI is, how it works, and where it fits into high-performance environments. That article made an important point: AI is already influencing recruitment, training planning, tactical decision-making and support systems in elite sport. It is not a future concept. It is already here.

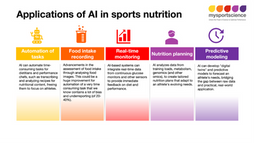

In “Artificial intelligence (AI) in sports nutrition,” the emphasis was even closer to daily practice. Sports nutritionists, dietitians and athletes now interact with AI in many forms, often without even thinking about it. Readiness scores, automated feedback, recovery summaries and data interpretation are already part of routine workflows. But that article also stressed something essential: the professionals who will benefit most are not those who reject AI, and not those who trust it blindly, but those who understand where it is reliable and where it is not.

This new BMJ Open paper supports exactly that message. AI can be helpful, but only when its limitations are understood. In fields such as nutrition and athletic performance, the risks are not theoretical. These are areas already saturated with commercial claims, oversimplified messaging and pseudoscience. If a chatbot is trained on mixed-quality information and then presents it with authority, the result can look much stronger than it really is.

That is also why the MySportScience blog “Will artificial intelligence (AI) replace sports practitioners?” is so relevant here. The real issue is not replacement. The real issue is judgement. AI may help organise, summarise and accelerate, but evidence appraisal, context, ethics and decision-making still depend heavily on knowledgeable practitioners. This paper is a good reminder that human expertise is not a luxury added at the end. It is central to safe and effective use.

The bigger implication

The most important conclusion of the paper is also the simplest: without public education and oversight, there is a risk that AI amplifies misinformation rather than reducing it.

Tools matter. But so do the data they are trained on, the way they are deployed, the safeguards they include, and the knowledge of the person using them. In health, medicine, sport and sports nutrition, fluent language should never be confused with understanding. A polished answer can still be wrong. A confident answer can still be misleading. And a citation list can still be fabricated.

Practical take-home message

So where does this leave us? AI can be useful for support tasks. It can help structure information, speed up routine work and generate first drafts. But when the task is evidence interpretation, health guidance or decision-making in complex domains, caution is essential.

For practitioners, the lesson is straightforward: verify claims, check references, challenge confident answers, and do not confuse readability or fluency with quality.

For the public, the lesson is equally important: chatbots may be convenient, but convenience is not the same as reliability, and it does not mean you can always trust them.

And for all of us working in evidence-based sport and sports nutrition, this paper is a timely reminder that good practice still depends on critical thinking, deeper knowledge and professional judgement.

Reference

Nicholas B Tiller, Alessandro R Marcon, Marco Zenone, Kristin E Kidd, Asker E Jeukendrup, Zubin Master, Timothy Caulfield. Generative artificial intelligence-driven chatbots and medical misinformation: an accuracy, referencing and readability audit. BMJ Open 2026;16:e112695. doi: 10.1136/bmjopen-2025-112695

Related reading and videos